The Project

Voice technology represents a paradigm shift in human-computer interaction, which makes it an extremely exciting field. CNBC was asked to be a launch partner for Amazon Alexa and saw this as an opportunity to reach consumers through a constellation of new touchpoints: smart speakers, touchscreen devices, apps, and voice-enabled smart TVs.

The initial hurdles were formidable. No one on our team had any experience building voice applications, and voice technology spanned a gamut of devices, from small screens to TVs to devices with no screen at all. Amazon provided extensive support, including training the team on best practices for Voice User Interface (VUI) design, technical expertise, and the API that powered the application.

Team Role

Working with a Product Owner, a technical lead, a support team, developers, and QA engineers, I was responsible for designing the CNBC skill's Voice User Interface (VUI) as well as the complementary Graphical User Interface (GUI). Each required its own information architecture, user task flows, wireframes, and prototypes, which were developed in tandem and required extensive documentation. I also conducted user research, using methods such as interviews, surveys, intercepts, and participatory design sessions, to understand user behavior around a technology that wasn't yet widely adopted.

Design Process

To identify the value proposition for such devices, as well as what users needed to accomplish with them, we conducted numerous user interviews and competitive analyses of voice applications already on the market. We also ran a number of moderated usability tests using the Wizard of Oz method to better understand user behavior. After compiling the data, we were able to identify users' key needs: up-to-the-minute information, the convenience of interacting with a device hands-free, and the ability to multitask. Although we knew the app could grow into something much larger and more robust, we focused on creating an MVP that met the user’s core needs.

After a number of brainstorming sessions, the team was able to identify three personas: the active investor, the retired investor, and the hobbyist. The personas served as models to construct the dialogs and to define the personality and speaking style of the skill.

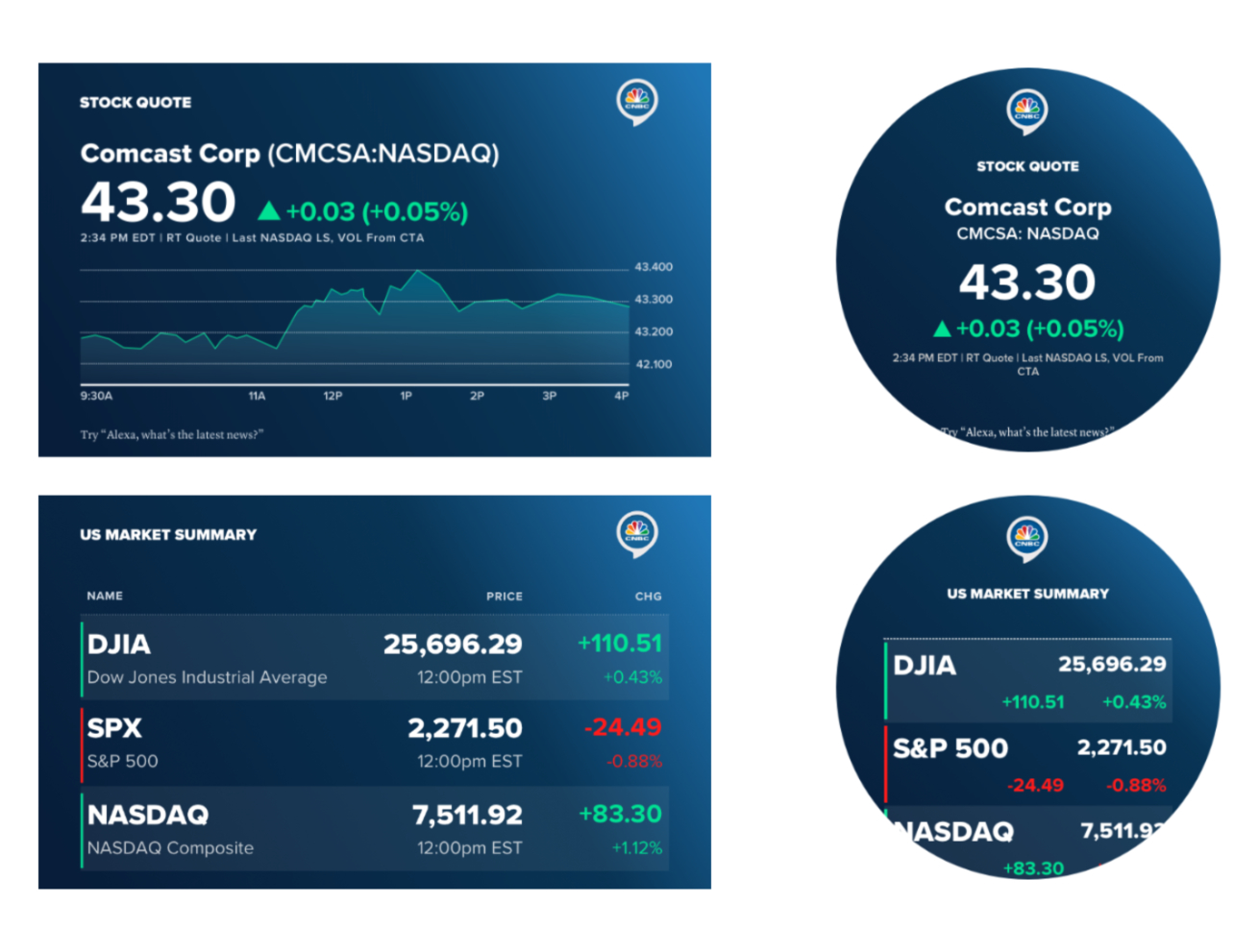

Interface Design

With the interaction model defined, it was time to construct the user flow diagrams. Visualizing the structure of each function helped the team identify opportunities for improvement and reduce the number of steps in each dialog, lowering the cognitive load on the user.

While creating the flows, I began working on an interaction script that simulates an exchange between the skill and the user. To construct a meaningful dialog, we had to understand what the user said, called the 'utterance,' and the request that the utterance expressed, called the 'intent,' which the skill then fulfilled with a response. This is a sample turn-based dialog:

User: Alexa, open CNBC

CNBC: Welcome to CNBC. You can ask for the news, market updates, or other market info. What would you like to hear?

User: Check on the US Markets

CNBC: As of the U.S. market close, the DOW was down 1.69 percent at 573 points, the NASDAQ Composite was down 2.31 percent at 306 points, and the S&P 500 was down 1.73 percent at 74.50 points.

CNBC: Anything else?

User: No

CNBC: Thanks for talking to CNBC
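
The relationship between utterances and intents in a dialog like the one above can be sketched in a few lines of Python. This is only an illustration, not the skill's actual interaction model: the intent names, the sample phrases, and the exact-match lookup are all assumptions made for the example. A real voice platform resolves intents with natural language understanding rather than string comparison.

```python
# Hypothetical intent names and sample utterances, chosen for illustration.
# The real skill's interaction model is not shown in this case study.
SAMPLE_UTTERANCES = {
    "MarketUpdateIntent": ["check on the us markets", "how are the markets doing"],
    "NewsIntent": ["what's the news", "give me the news"],
    "EndSessionIntent": ["no", "no thanks"],
}

# Assumed catch-all for phrases that match no sample utterance.
FALLBACK_INTENT = "FallbackIntent"


def normalize(utterance: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    kept = (
        ch for ch in utterance.lower()
        if ch.isalnum() or ch.isspace() or ch == "'"
    )
    return " ".join("".join(kept).split())


def resolve_intent(utterance: str) -> str:
    """Return the intent whose sample utterances include the spoken phrase."""
    spoken = normalize(utterance)
    for intent, samples in SAMPLE_UTTERANCES.items():
        if spoken in samples:
            return intent
    return FALLBACK_INTENT
```

Under these assumptions, calling `resolve_intent("Check on the US Markets")` resolves to the hypothetical `MarketUpdateIntent`, and the skill would then answer with the market summary.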

The Outcome

CNBC realized that the innate value proposition of voice search, that it is quick, convenient, and helpful, would give it an opportunity to form deeper relationships with consumers. As the technology matures and gains further adoption, it will open even more opportunities to connect with consumers. However, we learned that this potential for success was built on a base of extensive research and on the understanding that each platform is not an isolated channel but part of a larger constellation of experiences.